There’s a shift happening in how people find information online, and it’s moving faster than most businesses have adjusted to.

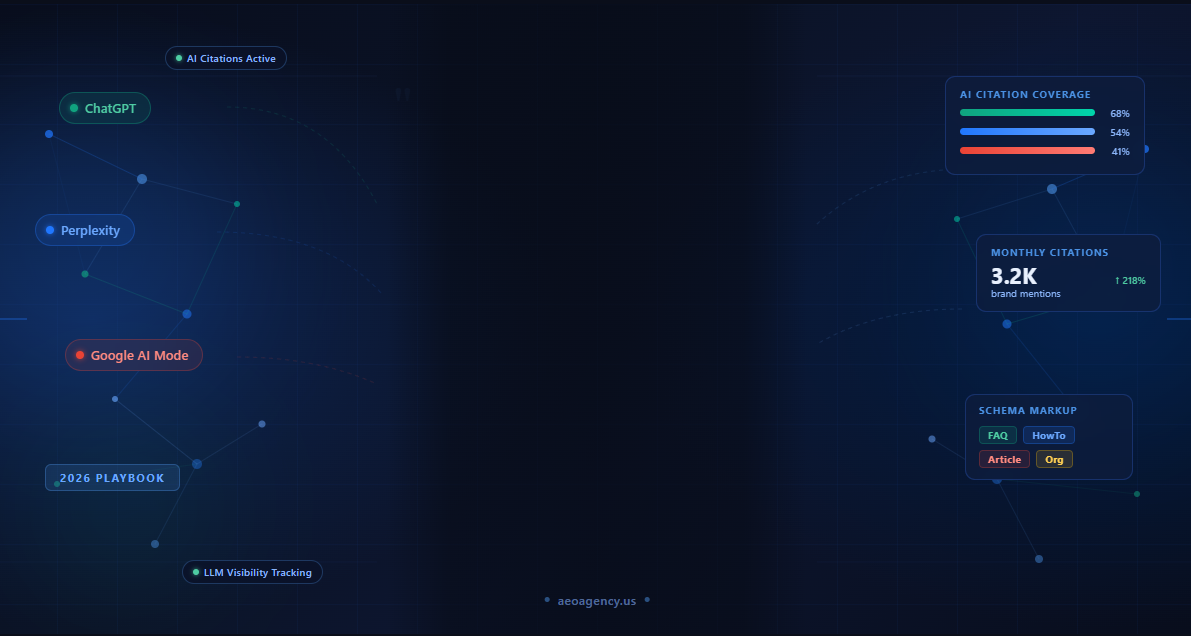

A growing number of searches, especially research-heavy, decision-stage queries, are now happening inside ChatGPT, Perplexity, and Google’s AI Mode rather than on a traditional search results page. People type full questions. An AI responds with a synthesized answer. Sometimes it cites sources. Often it doesn’t. This shift is one reason demand is rising for ChatGPT visibility services and other AI search optimization solutions.

If your business is one of those cited sources, you get the visibility. If it isn’t, you’re invisible even if you rank well on traditional search.

This guide is about changing that. We’re going to break down how LLMs (large language models) actually decide what to cite, what signals they respond to, and what you can do right now, platform by platform, to give yourself a real chance of showing up in those answers.

No vague advice. No generic “create high-quality content” platitudes. Just the actual mechanics and the actual tactics.

How LLMs Actually Decide What to Cite: The RAG Pipeline Explained

Most people assume AI tools like ChatGPT or Perplexity are pulling from some magical database of knowledge. The reality is more interesting, and understanding it changes how you approach everything else in this guide.

Most modern AI search tools use something called “RAG,” retrieval-augmented generation. Here’s what that means without the jargon:

When you ask Perplexity, “What’s the best way to optimize for AI search?” it doesn’t just pull from its training data. It first runs a live web search (or searches a curated index) and retrieves a set of relevant web pages, then uses its language model to synthesize those pages into a coherent answer. The sources it pulls from are the ones it ends up citing.

So the question of “how do I get cited by AI” is really two separate questions:

1. Can the AI find and access my content?

Your pages need to be indexed, crawlable, and formatted in a way that a machine can parse quickly. If your content is locked behind JavaScript rendering issues, has thin or blocked pages, or loads too slowly to be reliably retrieved, it gets skipped.

2. Does the AI trust and prefer my content over alternatives?

Once the AI retrieves a set of candidate pages, it has to decide which ones to use. It favors content that directly answers the query, uses specific and verifiable language, and comes from sources with credibility signals: backlinks, author information, and mentions on trusted third-party sites.

The RAG pipeline is why schema markup matters, why answer-first formatting matters, and why third-party mentions matter. Each of those things improves either discoverability or trustworthiness, the two gates a piece of content has to pass through before it gets cited.
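To make the two gates concrete, here is a toy sketch in Python. It assumes a simple keyword-overlap retriever and a made-up `trust` score between 0 and 1; every name in it is hypothetical, and real AI search systems use far more sophisticated retrieval and ranking than this.

```python
from dataclasses import dataclass


@dataclass
class Page:
    url: str
    text: str
    crawlable: bool  # gate 1: can the retriever access the page at all?
    trust: float     # gate 2 input: hypothetical 0-1 credibility score


def tokenize(text: str) -> set[str]:
    """Lowercase the text and split it into a set of bare words."""
    return {word.strip(".,?!:;").lower() for word in text.split()}


def retrieve(query: str, pages: list[Page]) -> list[Page]:
    """Gate 1 (discoverability): keep accessible pages that share words with the query."""
    q = tokenize(query)
    return [p for p in pages if p.crawlable and q & tokenize(p.text)]


def rank(query: str, candidates: list[Page]) -> list[Page]:
    """Gate 2 (trust and relevance): order candidates by word overlap weighted by trust."""
    q = tokenize(query)

    def score(page: Page) -> float:
        overlap = len(q & tokenize(page.text)) / max(len(q), 1)
        return overlap * page.trust

    return sorted(candidates, key=score, reverse=True)


def cite(query: str, pages: list[Page], top_k: int = 2) -> list[str]:
    """Return the URLs a toy answer engine would cite for this query."""
    return [p.url for p in rank(query, retrieve(query, pages))[:top_k]]
```

In this sketch a page with a perfect trust score is still never cited if `crawlable` is false, and a crawlable page that barely matches the query loses to one that answers it directly. That is the practical point of the two-gate model.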

One more important nuance: different platforms use slightly different retrieval methods. ChatGPT’s browsing mode, Perplexity’s index, and Google AI Mode all have their own weighting. We’ll cover each one separately later in this guide.


The Six Content Signals Every LLM Looks for Before Citing a Source

Before getting platform-specific, here are the signals that consistently influence citation behavior across all major AI tools. Think of these as the universal requirements, the things that apply whether you’re trying to show up in ChatGPT or Google AI Mode.

1. Direct, specific answers to the query

This is the most important one. LLMs are looking for content that answers the exact question being asked, stated clearly and early in the content. Vague, general, or overly hedged answers don’t get selected. If someone asks “how long does LLM SEO take to show results,” the AI wants a page that answers that specific question, not a page that talks about AI search in general.

Answer-first formatting, where the direct answer comes in the first 40 to 60 words of each section, is the single most effective structural choice you can make for LLM citation.

2. Factual specificity and verifiability

LLMs are trained to prefer content that makes specific, checkable claims. “Most businesses see AI citation results within 6 to 12 weeks” is stronger than “results take some time.” Citing real data, real studies, and real figures with clear attribution signals that your content is reliable enough to be repeated.

3. Authoritativeness signals in the content itself

Author bylines, credentials, and about pages are read by the systems that index content. A page with no author, no organization, and no external references looks less trustworthy than a page that clearly identifies who wrote it and why they would know.

4. Topical depth and internal coverage

A single well-written page on a topic is less likely to be cited than a site that has multiple interlinked pages covering that topic from different angles. AI tools read topical signals. A website that clearly specializes in LLM SEO with a pillar page, cluster articles, and an FAQ page all linking together reads as more authoritative than a site with one stray article on the topic.

5. Clean technical structure

Fast-loading pages, mobile-friendly design, proper heading hierarchy (H1 > H2 > H3), and accessible content without heavy JavaScript rendering all make it easier for AI retrieval systems to parse your page accurately. Technical issues don’t just hurt SEO; they actively impede the retrieval step in the RAG pipeline.

6. Off-page trust signals

Third-party mentions on sites that AI tools already trust are a powerful citation signal. If reputable publications, industry directories, and credible blogs reference your content or your brand, that web of external references builds your credibility with the systems that decide what to cite.

How to Optimize for ChatGPT Citations Specifically: ChatGPT SEO and LLM Citation Strategy

ChatGPT’s citation behavior has evolved significantly since OpenAI introduced web browsing capabilities. Here’s how it currently works and what you should be doing about it.

How ChatGPT retrieves information:

ChatGPT with browsing enabled (available in GPT-4o and certain GPT-4 configurations) runs real-time web searches using Bing’s index. This means two things: your content needs to be indexed by Bing, and it needs to perform well by Bing’s quality signals, which are similar to but not identical to Google’s.

Check your Bing Webmaster Tools account (it’s free) and verify that your key pages are indexed. If they’re not, submit your sitemap there just as you would in Google Search Console.
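If you need to produce a sitemap by hand, the following is a minimal Python sketch that follows the sitemaps.org XML format (`urlset`, `url`, `loc`, `lastmod`). The URLs and dates in the usage note are placeholders, and most CMS platforms generate this file for you, so treat it as an illustration of the file’s shape rather than a required step.

```python
import xml.etree.ElementTree as ET
from datetime import date

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages: dict[str, date]) -> str:
    """Build sitemap XML from a mapping of page URL to last-modified date."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, last_modified in sorted(pages.items()):
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc
        # lastmod should reflect a real content change, not the build time
        ET.SubElement(url, "lastmod").text = last_modified.isoformat()
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body
```

For example, `build_sitemap({"https://example.com/guide": date(2025, 1, 15)})` returns a string you can save as `sitemap.xml` and submit in both Bing Webmaster Tools and Google Search Console.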

What ChatGPT prefers:

Based on observed citation patterns across thousands of queries, ChatGPT tends to favor:

- Pages with clear question-and-answer structure

- Content that uses numbered lists or step-by-step formatting for process-based questions

- Pages from sites with established authority on the topic (high Bing domain trust)

- Content that references real data, real studies, or named sources

- Shorter, self-contained answers rather than long meandering paragraphs

One practical tactic: structure your key pages so that each major section begins with a direct question as the heading (H2 or H3), followed immediately by a 2- to 4-sentence direct answer before any elaboration. ChatGPT’s retrieval system can extract that block and use it cleanly.
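A quick way to audit this structure across many pages is a small script. The sketch below is one possible check, assuming you have already extracted each section’s heading and first paragraph as plain strings. The function names are illustrative, and the sentence splitter is deliberately naive (abbreviations such as “e.g.” will be over-counted).

```python
import re


def count_sentences(paragraph: str) -> int:
    """Rough sentence count: split after '.', '!' or '?' followed by whitespace."""
    parts = re.split(r"(?<=[.!?])\s+", paragraph.strip())
    return len([part for part in parts if part])


def check_section(heading: str, first_paragraph: str) -> list[str]:
    """Return a list of problems with a section's heading and opening answer."""
    problems = []
    if not heading.strip().endswith("?"):
        problems.append("heading is not phrased as a question")
    sentences = count_sentences(first_paragraph)
    if not 2 <= sentences <= 4:
        problems.append(
            f"opening answer has {sentences} sentences, expected 2 to 4"
        )
    return problems
```

An empty list means the section follows the pattern. A check like this only verifies the shape of the section; whether the opening actually answers the question still needs a human read.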

The Bing connection:

Because ChatGPT uses Bing’s index, your Bing optimization matters here. Make sure your site is submitted to Bing Webmaster Tools, your sitemap is up to date, and your pages pass basic quality checks. Bing gives slightly more weight to on-page content freshness than Google, so keeping pages updated with accurate dates is particularly useful.

On llms.txt:

OpenAI has indicated interest in the emerging llms.txt standard (covered in depth in Article 6 of this hub). Adding an llms.txt file to your site is a forward-facing signal of cooperation with AI crawlers that may influence ChatGPT’s willingness to surface your content.

How to Optimize for Perplexity Citations Specifically: Perplexity AI SEO Strategy

Perplexity is the platform that most serious AEO practitioners pay the closest attention to, partly because it shows its sources transparently and partly because it has a strong reputation for research-quality answers that attract high-intent users.

How Perplexity retrieves information:

Perplexity runs its own web index alongside real-time retrieval. It also uses multiple underlying models depending on the query type and the user’s chosen mode (quick, deep research, etc.). In all modes, it fetches and synthesizes web content, then cites the sources it drew from.

A significant difference from ChatGPT: Perplexity surfaces its citations prominently and in real time. Users can see exactly which pages the answer came from. This makes being cited in Perplexity particularly valuable for brand awareness; users see your site name attached to a credible answer.

What Perplexity prefers:

Perplexity has a noticeable preference for:

- Pages that contain specific, factual information with named sources or data points

- Content that is well-structured with clear headings and numbered sections for multi-part answers

- Sites with strong backlink profiles and external mentions (it correlates closely with domain authority)

- Recently updated content (Perplexity appears to weight freshness more heavily than ChatGPT for time-sensitive queries)

- FAQ-style pages and comparison articles (these get pulled especially often for “X vs Y” and “how to” queries)

Practical tactics for Perplexity:

First, identify which of your pages are currently being cited by running your target queries in Perplexity manually. Screenshot the results. Note which domains are appearing consistently; those are your citation competitors for each query.

Second, look at what those cited pages have that yours don’t. Is it more specific data? A cleaner structure? More external references? Matching that gap is your fastest path to displacement.

Third, build external references to your content. Perplexity’s citation algorithm is heavily influenced by who else on the web treats your content as a credible source. Getting your pages linked by industry publications, listed in reputable directories, or referenced in roundup articles significantly increases your Perplexity citation rate.
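Once you have recorded the cited URLs for each query in the first step, finding your consistent citation competitors is a counting exercise. Here is a minimal Python sketch, assuming you have typed the cited URLs into a dictionary keyed by query; the function name and data shape are illustrative.

```python
from collections import Counter
from urllib.parse import urlparse


def citation_competitors(cited_urls_by_query: dict[str, list[str]]) -> Counter:
    """Count how many queries cite each domain at least once."""
    counts: Counter = Counter()
    for urls in cited_urls_by_query.values():
        # A set, so a domain cited twice for one query is counted once
        domains = {
            urlparse(url).netloc.lower().removeprefix("www.") for url in urls
        }
        counts.update(domains)
    return counts
```

Calling `citation_competitors(observed).most_common(5)` lists the five domains that appear across the most queries, which are the pages worth studying in the second step.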

The “Deep Research” factor:

Perplexity’s Deep Research mode, which runs longer multi-step research sessions, tends to pull from a wider range of sources and favors comprehensive, well-organized content over short snippets. If your audience uses Deep Research for buying decisions or vendor comparisons, make sure your key landing and comparison pages are thorough, well-sourced, and clearly structured.

How to Optimize for Google AI Mode and AI Overviews Specifically: Google AI Search Optimization

Google AI Mode and AI Overviews represent the highest-traffic opportunity in the LLM SEO space right now, simply because Google processes far more queries than any other platform. Being cited in Google’s AI-generated answers reaches more people than any other AI citation.

AI Overviews vs. Google AI Mode:

These are related but distinct features. AI Overviews (formerly Search Generative Experience) appear as an AI-generated summary at the top of standard search results for certain queries. Google AI Mode is a dedicated AI search interface that users opt into, designed for more conversational, multi-step research sessions.

Both draw from Google’s web index, and both are influenced by traditional Google ranking signals more heavily than Perplexity or ChatGPT.

What Google AI uses to decide who to cite:

This is the most well-documented of the three platforms because it builds most directly on Google’s existing quality framework. Key factors:

- E-E-A-T (Experience, Expertise, Authoritativeness, Trustworthiness): Google’s AI systems inherit the same quality signals Google’s ranking systems use. Pages from sites with strong author credentials, consistent accurate information, and good reputational signals get preferred.

- Featured snippet eligibility: There is strong evidence that pages that already appear in featured snippets are prioritized for AI Overview citations. Optimizing for featured snippets by providing concise, direct answers in the 40 to 60 word range is effective for both.

- Structured data: Google’s AI Mode reads schema markup (FAQ, HowTo, Article) explicitly and uses it to parse your content more accurately. Pages with proper schema are more reliably parsed and more likely to be cited.

- Content freshness: Google AI Mode shows a preference for recently updated content, particularly for queries where information changes quickly (industry data, pricing, tools, research).

Practical tactics for Google AI:

Make sure your most important pages have been updated in the last 6 months. A quick refresh (adding new data, updating statistics, or adding a section that addresses a new angle on the topic) counts as a meaningful update and resets freshness signals.

Implement FAQ schema on any page where you’ve included a question-and-answer section. Google reads this directly, and it significantly improves your chances of being pulled for relevant queries.
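If you are adding the markup by hand, FAQ schema is a JSON-LD object of type `FAQPage` whose `mainEntity` is a list of `Question` items, each with an `acceptedAnswer`. The Python sketch below generates that object from question-and-answer pairs. The function name is illustrative, and the sample pair in the usage note is placeholder copy.

```python
import json


def faq_jsonld(pairs: list[tuple[str, str]]) -> str:
    """Build FAQPage JSON-LD from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2)
```

For example, `faq_jsonld([("How long does LLM SEO take to show results?", "Most businesses see AI citation results within 6 to 12 weeks.")])` returns a JSON string that you place inside a `<script type="application/ld+json">` tag on the page. The marked-up questions and answers should match the visible Q&A text on that page.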

Target featured snippets deliberately. For your most important questions, write a clean 50-word paragraph that directly answers the question, without preamble. Put it at the top of the relevant section. Test whether you’re earning the snippet by searching the query in Google. Featured snippet presence strongly correlates with AI Overview citation.

Finally, your Google Business Profile matters here for local and brand queries. An optimized, active, complete GBP gives Google more data to work with when generating AI answers about your business specifically.

Technical Signals That Help LLMs Find and Trust Your Content: Schema, Structured Data, and llms.txt

On the technical side, there are several things you can do to make your content more readable, more parseable, and more trustworthy to AI retrieval systems.

Schema markup (JSON-LD):

The most impactful schema types for LLM citation are FAQ, HowTo, Article, and Organization schemas.

- FAQ schema marks up question-and-answer pairs so AI systems can extract them directly. Add this wherever you have Q&A sections.

- HowTo schema marks up step-by-step processes. Particularly effective for instructional content.

- Article schema tells machines your publication date, author, and headline. Keep dates accurate and current.

- Organization schema on your homepage and About page tells AI systems your official business name, address, website, and social profiles. This is especially important for brand-level queries in GEO.

Use Google’s Rich Results Test to validate your schema after adding it. Fix any errors before expecting results.
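For reference, here is a minimal Python sketch of what Article and Organization markup can look like, using standard schema.org properties (`headline`, `author`, `datePublished`, `dateModified`, `name`, `url`, `sameAs`). The helper names and all sample values are placeholders; adapt the fields to what your pages actually state.

```python
import json


def article_jsonld(headline: str, author: str, published: str, modified: str) -> dict:
    """Article markup: headline, author, and ISO 8601 publication dates."""
    return {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author},
        "datePublished": published,
        "dateModified": modified,
    }


def organization_jsonld(name: str, url: str, profiles: list[str]) -> dict:
    """Organization markup: official name, website, and social profile URLs."""
    return {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "sameAs": profiles,
    }


def to_json(data: dict) -> str:
    """Serialize markup for embedding in a JSON-LD script tag."""
    return json.dumps(data, indent=2)
```

The output of `to_json(...)` goes inside a `<script type="application/ld+json">` tag. Keep `dateModified` tied to real content changes so the markup and the visible page date agree.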

Heading hierarchy:

Your heading structure (H1 as the page title, H2s as main sections, H3s as subsections) is one of the primary tools AI retrieval systems use to understand the structure of your content. Keep it clean and logical. Every H2 should clearly signal what the section covers. AI tools often extract headings as a structural map before reading the content under them.
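A heading audit is easy to automate. The sketch below uses Python’s standard-library HTML parser to flag two common problems: a page without exactly one H1, and a jump that skips a level (for example, an H1 followed directly by an H3). The class and function names are illustrative, and the rules are one reasonable reading of “clean and logical,” not an official standard.

```python
from html.parser import HTMLParser


class HeadingCollector(HTMLParser):
    """Collect heading levels (1-6) in document order."""

    def __init__(self) -> None:
        super().__init__()
        self.levels: list[int] = []

    def handle_starttag(self, tag, attrs):
        if len(tag) == 2 and tag[0] == "h" and tag[1] in "123456":
            self.levels.append(int(tag[1]))


def heading_issues(html: str) -> list[str]:
    """Return human-readable problems with the page's heading hierarchy."""
    parser = HeadingCollector()
    parser.feed(html)
    parser.close()
    levels = parser.levels
    issues = []
    if levels.count(1) != 1:
        issues.append(f"expected exactly one h1, found {levels.count(1)}")
    for previous, current in zip(levels, levels[1:]):
        if current > previous + 1:
            issues.append(f"h{previous} is followed by h{current} (skipped level)")
    return issues
```

An empty list means the hierarchy passes both checks. Moving back up (an H3 followed by an H2) is treated as normal, since that is simply the start of the next main section.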

Page speed and crawlability:

A page that takes 5 seconds to load or that requires JavaScript execution to display its content may be partially or fully inaccessible to AI crawlers. Run your key pages through PageSpeed Insights (free from Google) and address any critical issues. Make sure your content is accessible in raw HTML, not locked behind client-side rendering.
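One rough way to test for client-side rendering is to count how many words are present in the HTML as delivered, ignoring anything inside `script` and `style` tags. The Python sketch below does that for an HTML string you have already fetched. The 150-word threshold is an arbitrary assumption for illustration, and the names are hypothetical.

```python
from html.parser import HTMLParser


class VisibleText(HTMLParser):
    """Collect text that exists in the raw HTML outside script/style tags."""

    SKIP = {"script", "style"}

    def __init__(self) -> None:
        super().__init__()
        self._skip_depth = 0
        self.chunks: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIP:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIP and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth and data.strip():
            self.chunks.append(data.strip())


def raw_html_word_count(html: str) -> int:
    """Count words a non-JavaScript crawler could read from this HTML."""
    parser = VisibleText()
    parser.feed(html)
    parser.close()
    return len(" ".join(parser.chunks).split())


def looks_client_rendered(html: str, min_words: int = 150) -> bool:
    """Flag pages whose raw HTML carries very little readable text."""
    return raw_html_word_count(html) < min_words
```

If a page you know has a thousand words of copy comes back with a count near zero, the content is probably being injected by JavaScript after load, and server-side rendering or pre-rendering is worth investigating.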

llms.txt:

This is a newer file format that you can add to your website’s root directory to tell AI systems what your site is about and which content you want them to prioritize. Think of it as a communication channel specifically for AI crawlers. We cover this in full in Article 6 of this hub (the dedicated llms.txt guide), but the short version is: add one, keep it updated, and include clear descriptions of your most important content.
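As a rough illustration of the file’s shape, the Python sketch below writes a basic llms.txt following the layout in the public llms.txt proposal as we understand it: a title line, a short blockquote summary, then sections of described links in Markdown. The format is still emerging, so treat the exact layout as an assumption and check the current proposal before publishing. The site name, URLs, and descriptions in the usage note are placeholders.

```python
def build_llms_txt(
    site_name: str,
    summary: str,
    sections: dict[str, list[tuple[str, str, str]]],
) -> str:
    """Build llms.txt content.

    sections maps a section heading to (link title, URL, description) tuples.
    """
    lines = [f"# {site_name}", "", f"> {summary}", ""]
    for heading, links in sections.items():
        lines.append(f"## {heading}")
        lines.append("")
        for title, url, description in links:
            lines.append(f"- [{title}]({url}): {description}")
        lines.append("")
    return "\n".join(lines).rstrip() + "\n"
```

For example, `build_llms_txt("Example Co", "Guides on LLM SEO.", {"Guides": [("LLM SEO guide", "https://example.com/llm-seo", "How AI tools choose sources")]})` returns text you can save as `llms.txt` in your site root.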

Off-Page Signals: Third-Party Mentions, Reviews, and Press Coverage for LLM Citation

Off-page signals are often overlooked in AEO discussions because they feel harder to control than on-page content. But they may be the single most important factor in whether AI tools trust and cite you, especially in competitive categories.

Here’s why: LLMs are trained on large datasets of web content. The sites and sources they were trained on (major publications, trusted directories, and well-known industry sites) carry more weight in their understanding of what’s credible. If those trusted sources mention your brand or link to your content, AI tools are far more likely to consider you a citable source.

What to focus on:

Industry publication mentions. Getting featured, quoted, or referenced in publications that cover your industry is high-value AEO work. This isn’t just about link-building for SEO; it’s about building the web of references that AI tools use to determine your credibility.

Reviews on trusted platforms. Google Reviews, G2, Trustpilot, and Clutch are all indexed and trusted sources. Having a strong, specific review presence on these platforms means that when an AI is trying to determine your brand’s reputation, it has verified third-party data to pull from.

Directory listings and citations. For local and service businesses, being listed accurately on directories like Yelp, BBB, and industry-specific directories creates a consistent citation footprint that AI tools cross-reference when building their understanding of your business.

Guest contributions. Writing for established publications in your space, even without a link, builds your author footprint. When your name appears on credible third-party sites, AI tools that encounter your own site’s content have more external confirmation that you’re a legitimate expert.

HARO and journalist connections. Being quoted as an expert in news articles creates exactly the kind of credible third-party mention that LLMs are trained to respect. Set up alerts for relevant topics and respond consistently to journalist queries.

The underlying logic is simple: AI tools don’t inherently trust any site. They calibrate trust based on signals, and one of the strongest signals is “other credible sources vouch for this one.” Build those external references, and your citation rate will follow.

How to Measure Whether You Are Being Cited by AI Tools: LLM Citation Tracking Methods

Measurement is the honest challenge of LLM SEO. The tracking infrastructure isn’t as clean as Google Search Console, and it probably won’t be for a while. But you can absolutely measure this; it just requires a different approach.

Manual prompt testing (free and accurate):

Go back to your prompt audit list. Run every target prompt in ChatGPT, Perplexity, and Google AI Mode. Record the results. Note which sources are cited for each query and whether your site appears. Do this once a month and track changes over time in a simple spreadsheet.

Yes, this takes time. But it also gives you the most accurate picture of what’s actually happening, because you’re testing the exact tools your audience uses with the exact questions they ask.
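If you keep that spreadsheet as a CSV, a few lines of Python can turn it into a citation rate per platform. The sketch below assumes a hypothetical column layout (`month`, `platform`, `prompt`, `cited`, `cited_url`) with `cited` recorded as `yes` or `no`; rename the fields to match whatever your own log uses.

```python
import csv
from collections import defaultdict
from io import StringIO


def load_log(csv_text: str) -> list[dict]:
    """Parse the prompt-test log from CSV text into a list of row dicts."""
    return list(csv.DictReader(StringIO(csv_text)))


def citation_rate_by_platform(rows: list[dict]) -> dict[str, float]:
    """Share of tested prompts on each platform where your site was cited."""
    totals: dict[str, int] = defaultdict(int)
    hits: dict[str, int] = defaultdict(int)
    for row in rows:
        totals[row["platform"]] += 1
        if row["cited"].strip().lower() == "yes":
            hits[row["platform"]] += 1
    return {platform: hits[platform] / totals[platform] for platform in totals}
```

Running this on each month’s rows gives you one number per platform to compare over time, which is easier to read than scanning the raw log.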

SE Ranking’s AI Overview tracker:

SE Ranking has built AI Overview detection into its rank tracking tool. You can set up tracking for specific queries and see whether your site appears in an AI Overview for those queries over time. This is the most automated way to track Google AI Overview citations without manual testing.

Semrush’s AI tracking features:

Semrush has been adding AI visibility features to its toolkit. Check the current state of these features in your Semrush account, as they’ve been updating rapidly through 2025 and 2026.

Brandwatch, Brand24, and Mention:

These brand monitoring tools pick up AI-generated content that gets published or surfaced online, and they can alert you when your brand name or website appears in AI-generated contexts. Not perfect, but useful as a supplementary signal.

Search Console indirect signals:

When AI tools cite your content and users then search your brand name to find your site directly, you’ll see increased branded search volume in Search Console. A rising branded search trend following AEO work is a meaningful indirect indicator that AI is surfacing you.
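One simple way to watch that trend is to compute the branded share of your query data each month. The Python sketch below assumes you have exported queries with their impression counts and reduced them to `(query, impressions)` pairs; the function name and the substring-matching rule are illustrative simplifications.

```python
def branded_share(rows: list[tuple[str, int]], brand_terms: list[str]) -> float:
    """Fraction of impressions that come from queries containing a brand term."""
    terms = [term.lower() for term in brand_terms]
    total = sum(impressions for _, impressions in rows)
    if total == 0:
        return 0.0
    branded = sum(
        impressions
        for query, impressions in rows
        if any(term in query.lower() for term in terms)
    )
    return branded / total
```

For example, `branded_share([("acme seo", 30), ("llm seo guide", 70)], ["acme"])` gives a branded share of 30 percent. A share that rises in the months after your AEO work, alongside steady or growing totals, supports the indirect signal described above, although it cannot prove that AI citations were the cause.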

What to track in your monthly report:

- How many target prompts cite your site (and which platforms)

- Which pages are being cited

- What changed in the month prior (content updates, new pages, external mentions)

- Search Console branded query trend

Over three to six months, you’ll see clear patterns about what’s working and what isn’t.

A 30-Day LLM Visibility Action Plan: Getting Started with LLM SEO This Month

Here’s a realistic 30-day plan for a business that has decent SEO foundations and wants to start building an AI citation presence.

Week 1: Audit and prioritize

- Run a prompt audit. Spend 2 to 3 hours in ChatGPT, Perplexity, and Google AI Mode typing every question your audience might ask. Record who’s being cited.

- Identify your 5 highest-priority pages, the ones that answer the questions you most want to own.

- Check those pages in Google Search Console. Are they indexed? Are they getting impressions for relevant queries? Note current performance.

- Run those pages through PageSpeed Insights and fix any critical speed issues.

Week 2: On-page content restructuring

- Rewrite the opening section of each priority page to lead with a direct answer in the first 50 words.

- Add or improve H2 headings on each page to be question-formatted where relevant.

- Add a Q&A section to each priority page with 5 to 8 well-answered questions (phrased the way your audience actually asks them in AI tools).

- Verify all author bylines and publish dates are accurate and visible.

Week 3: Schema and technical work

- Add FAQ schema to your Q&A sections (use Rank Math or Yoast if on WordPress, or add JSON-LD manually).

- Add or update Article schema on your key blog posts.

- Add Organization schema to your homepage if you don’t have it already.

- Create and upload a basic llms.txt file to your site root (see Article 6 of this hub for the full guide).

- Submit your sitemap to Bing Webmaster Tools if you haven’t already.

Week 4: Off-page and monitoring setup

- Identify 3 industry publications you could realistically get a mention in and reach out with a guest post pitch, expert quote request, or HARO response.

- Audit your Google Business Profile and update any incomplete sections.

- Set up SE Ranking or Semrush AI tracking for your 10 most important queries.

- Run a second full manual prompt test to compare against the Week 1 baseline.

- Set up a monthly calendar reminder for ongoing prompt testing.

After 30 days you won’t have full results; that takes 2 to 3 months. But you’ll have a clean baseline, proper technical foundations, improved content structure, and monitoring in place to measure what happens next.